Google introduces Nested Learning: A new ML paradigm for continual learning

Google introduces Nested Learning, an innovative machine-learning strategy developed by Google Research that reframes how AI models are built and trained. Delivered through a blog post by the team, this advancement describes a model architecture structured as a series of nested optimization problems, each with its own context-flow and update frequency — designed to improve long-context reasoning and continual learning.

Why Nested Learning matters for AI’s evolution

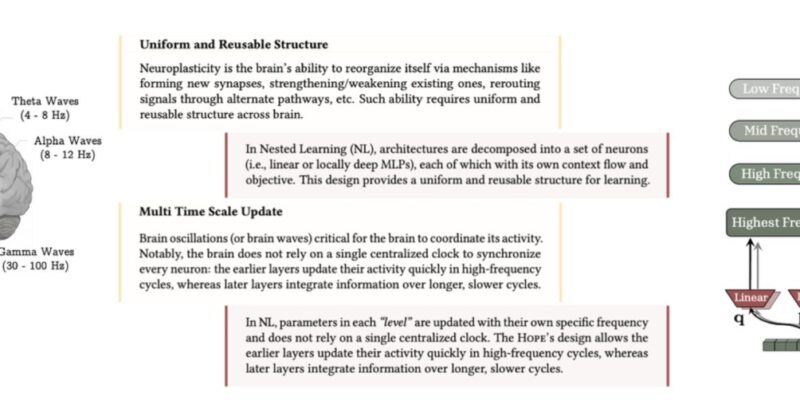

In traditional deep-learning frameworks, model architectures and training algorithms have been treated separately — the structure of the model on one hand, and the learning rule on the other. Google’s Nested Learning flips that paradigm by seeing both as levels of optimization inside one unified system. Each level has its own objective and update cadence, helping mitigate the “catastrophic forgetting” problem — where new learning erases prior capabilities.

Furthermore, building in support for long-context processing is essential as models attempt to reason over thousands or even millions of tokens, documents or multimodal inputs. Nested Learning introduces what Google calls a “continuum memory system” — a hierarchy where different memory modules update at different frequencies, creating a rich multilayer memory architecture.

How Nested Learning works in practice

According to Google’s blog, the Nested Learning paradigm treats a complex model as a composition of smaller, interconnected optimization tasks. Each has its “context flow” — the stream of information it processes — and its own update rate. For example, an outer level might update weekly on large batches of data, while an inner level updates in real time on new prompts or context windows.

Google tested this paradigm with a proof-of-concept architecture named “Hope”. Hope uses these nested modules to support extended context windows and aims to self-modify and improve over time. In early experiments, it demonstrated improved performance on long-context reasoning tasks compared to standard transformer models.

What this means for long-context and continual learning

With Google introduces Nested Learning, one of the major implications is that AI systems could better retain older knowledge while learning new tasks — a common limitation of current large models. The paradigm aims to bridge short-term in-context learning (immediate prompt responses) with long-term memory and model evolution.

Long-context capability is increasingly important: as applications require models to handle full documents, conversations or multimodal streams, memory and reasoning across vast sequences becomes a bottleneck. Nested Learning’s multi-timescale memory modules directly address that challenge.

Potential challenges and implementation considerations

While Google introduces Nested Learning marks a significant research milestone, real-world adoption will require refinement. The architecture’s complexity — coordinating multiple nested optimizers and memory modules — poses training and infrastructure demands. Also, scaling models that maintain multiple frequencies of updates and layers of memory may incur high computational costs.

Moreover, field-testing in production environments will determine how these nested systems fare in robustness, efficiency, and generalization compared to existing architectures that rely on simpler, monolithic training pipelines.

Looking ahead

As Google introduces Nested Learning, the broader AI community gains a new blueprint for continual and long-context learning. If adopted widely, this paradigm could reshape how models evolve over time — shifting from static training cycles to dynamic, self-improving systems.

Developers, researchers and organizations will be watching closely how models designed under this paradigm perform in production — particularly in settings demanding sustained reasoning, memory over extended sequences, and adaptability to new tasks without forgetting previous ones.